
For any platform in the NSFW space, user-generated content moderation isn't just another item on a feature list; it's the bedrock your entire business is built on. It’s the gatekeeper that ensures the experience you promise your users is the one they actually get, all while keeping you on the right side of the law.

Get this right, and you build the kind of trust that keeps users coming back. Get it wrong, and you risk everything.

Why User Generated Content Moderation Is Non-Negotiable

This isn’t about censorship. It’s about curation. Think of your platform as an exclusive club. Your moderation strategy is both the velvet rope and the bouncer—it defines the vibe and makes sure everyone inside is there for the right reasons. Without it, the club descends into chaos, and the very people you built it for will head for the exits.

The Core Pillars of Moderation

A solid moderation system stands on a few critical pillars. If one of them wobbles, the whole structure is at risk.

- Protecting Your Brand: A single viral screenshot of something awful on your platform can tank your reputation overnight. Unmoderated content invites bad press, scares away users, and makes partners think twice.

- Staying Legally Compliant: The law isn't optional. Platforms are on the hook for preventing the spread of illegal content. Ignoring this can lead to crippling fines, lawsuits, or even getting shut down completely.

- Building User Safety and Trust: People need to feel safe to be themselves, especially in an adult-focused space. When users trust that you'll enforce your own rules, they stick around and engage more deeply.

- Fostering a Healthy Community: Good moderation weeds out the trolls, the spammers, and the harassers. This allows a positive, organic culture to grow, where users actually want to interact with each other.

A Proactive Competitive Advantage

In the crowded market of adult platforms, trust is your most valuable currency. Users have plenty of choices, and they will bail instantly from any app that feels unsafe, unmanaged, or just plain toxic.

A proactive, well-designed moderation system is more than just a defensive shield—it’s your single biggest competitive edge. It's what drives user retention, attracts advertisers, and secures your long-term profitability.

This guide will skip the abstract theories and get straight to the battle-tested strategies that separate the thriving platforms from the dead ones. We'll break down exactly how to build a robust moderation framework that becomes the engine for sustainable growth.

Defining the Rules of the Road for Your Platform

Your content policy is far more than a legal document you tuck away in a footer link. It’s the very soul of your community—a public promise about the kind of space you're building and what behavior is and isn't welcome. For an NSFW platform, this requires laser-like precision to draw a hard, bright line between creative freedom and genuinely harmful or illegal activity.

Think of it as setting the ground rules before a game starts. Everyone needs to know where the out-of-bounds lines are. When policies are vague or wishy-washy, you create gray areas that breed confusion, leading to inconsistent moderation, angry users, and serious legal headaches.

This clarity is the bedrock of any successful user-generated content moderation strategy. It gives your team, both the people and the algorithms, the confidence to act quickly, decisively, and fairly.

From Zero-Tolerance to Community Vibe

First things first, you need to sort your risks into different buckets. Start with the absolute, non-negotiable legal red lines, then move on to the community standards that shape your platform's specific culture. Not all rule-breaking is created equal, and your policy needs to reflect that reality.

This tiered approach is what lets you respond proportionally. Someone posting illegal content gets an instant, permanent ban and a report to the authorities—no questions asked. But someone who trips over a minor community guideline might just get a warning or a short timeout. This is how you build a system that feels fair and can actually scale.
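Here is a minimal Python sketch of that proportional ladder. It assumes three hypothetical severity tiers and made-up action names. Your real ladder, durations, and reporting rules should come from your own policy, not from this example.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    ZERO_TOLERANCE = "zero_tolerance"  # illegal content: no warnings, no ladder
    SERIOUS = "serious"                # e.g. doxxing or targeted harassment
    MINOR = "minor"                    # e.g. a one-off community-guideline slip


@dataclass(frozen=True)
class Decision:
    action: str
    report_to_authorities: bool = False


# Hypothetical ladders for non-illegal violations, indexed by prior confirmed strikes.
MINOR_LADDER = ["warning", "24h_timeout", "7d_suspension", "permanent_ban"]
SERIOUS_LADDER = ["7d_suspension", "permanent_ban"]


def decide(severity: Severity, prior_strikes: int) -> Decision:
    """Return a proportional action for a violation a moderator has confirmed."""
    if severity is Severity.ZERO_TOLERANCE:
        # Illegal content skips the ladder entirely.
        return Decision("permanent_ban", report_to_authorities=True)
    ladder = SERIOUS_LADDER if severity is Severity.SERIOUS else MINOR_LADDER
    # Clamp so repeat offenders stay on the final rung instead of indexing past it.
    step = min(max(prior_strikes, 0), len(ladder) - 1)
    return Decision(ladder[step])
```

For example, `decide(Severity.MINOR, 0)` yields a warning, while `decide(Severity.MINOR, 2)` yields a seven-day suspension. Keeping the ladder as plain data makes it easy to publish alongside your guidelines, so users can see exactly what each strike costs.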

The Unbreakable Rules: No Platform Gets a Pass

Some content is so toxic and illegal that it's a zero-tolerance issue, period. These aren't up for debate; they are the absolute first priority for any moderation system you build. For any platform operating in the adult space, building an ironclad defense against this material is job number one.

Your policy must be crystal clear and forceful in banning:

- Illegal Content: First and foremost, this means Child Sexual Abuse Material (CSAM). There is zero room for interpretation here. Finding it means immediate removal, account termination, and a report to the appropriate authorities. In the United States, providers are legally required to report it to the National Center for Missing & Exploited Children (NCMEC).

- Exploitation and Non-Consensual Content: This covers any media showing violence, human trafficking, or sexual acts without the clear, enthusiastic consent of everyone involved. It also includes non-consensual intimate imagery (so-called "revenge porn") and any other private, intimate media shared without permission.

- Promotion of Real-World Harm: Any content that encourages violence, self-harm, terrorism, or other dangerous acts has no home on a responsible platform.

A strong policy isn't about restricting people; it's about building a safe container for expression. By ruthlessly defining and enforcing these zero-tolerance rules, you protect your users, your reputation, and your entire business from falling off a cliff.

Shaping Your Platform-Specific Guidelines

Once you've walled off the illegal stuff, it's time to define the rules that shape the community you actually want. This is where you get to decide your platform's personality. These are the behaviors that, while not necessarily illegal, can poison the well if left unchecked.

This is where you define the core risk categories of your NSFW platform policy. These definitions form the backbone of your daily moderation efforts and give your team clear instructions on how to handle nuanced situations.

Here’s a breakdown of the key categories you need to nail down:

| Risk Category | Definition | Examples of Prohibited Content |
|---|---|---|
| Harassment & Bullying | Targeted abuse, threats, or persistent unwanted contact aimed at an individual or group. | Repeatedly sending unsolicited messages, posting content to mock or shame another user, encouraging others to pile on. |
| Hate Speech | Attacks or dehumanizing language based on protected characteristics like race, religion, gender identity, or sexual orientation. | Slurs, violent threats against a protected group, content promoting discriminatory ideologies. |
| Doxxing & Privacy | Sharing someone's private, personally identifiable information (PII) without their explicit consent. | Posting a user's real name, home address, phone number, workplace, or private photos. |
| Spam & Scams | Deceptive or repetitive, low-quality content intended to mislead, defraud, or disrupt the user experience. | Phishing links, fraudulent promotions, bot accounts posting repetitive comments, impersonating platform staff. |

Defining these rules isn't a one-and-done task; it’s a serious investment in the long-term health of your platform. And it's a massive challenge. The global content moderation market was valued at $12.48 billion in 2025 and is expected to rocket to $42.36 billion by 2035, which shows you just how vital—and complex—this work has become.

When you write these rules, get specific. Don't just say "no harassment." Give concrete examples of what your platform actually considers harassment. This level of detail helps users police themselves and gives your moderators a clear playbook. For a real-world example of what this looks like in practice, you can see how we document these rules in our blocked content policies. This approach cuts through the ambiguity and makes sure everyone understands the rules of the road.

Building Your First Line of Defense with AI Moderation

When you’re running a platform with thousands, or even millions, of daily interactions, relying only on human reviewers is like trying to empty the ocean with a thimble. It’s not just inefficient; it’s flat-out impossible. This is where AI becomes your indispensable first line of defense in any serious user-generated content moderation strategy.

Think of AI moderation as a digital sentry that never sleeps, eats, or takes a break. It stands guard 24/7, instantly scanning every new message, image, and user bio before it ever gets a chance to cause harm. The goal isn't to replace your expert human team but to supercharge their abilities.

By automatically catching the most obvious and severe policy violations, AI frees up your people to focus on what they do best: applying nuanced judgment to the complex, gray-area cases that truly demand a human touch. This hybrid approach isn’t just a good idea—it’s the gold standard for effective, scalable safety operations.

How AI Learns to See and Read

So, how does it actually work? At its heart, AI moderation uses models trained on large labeled datasets to spot patterns associated with prohibited content. It’s not just hunting for a list of bad words. It learns statistical signals of context, intent, and visual cues, and it applies them at a speed no human team can match.

This has gotten incredibly advanced, moving far beyond checking a single piece of text or one image. The industry is all-in on multimodal approaches that analyze text, images, and even video together to get the full story. And it's a huge market—the text-based segment of content moderation services alone is expected to capture 45% of the market by 2035. You can discover more insights about these UGC trends and their impact on moderation for a deeper dive.

Three key pieces of tech form the backbone of this automated defense system.

- Natural Language Processing (NLP): This is what lets the AI understand human language. NLP models analyze text for signs of harassment, hate speech, spam, and solicitation. They go way beyond simple profanity filters to grasp the real meaning and intent behind a conversation.

- Computer Vision (CV): This tech gives the AI its "eyes." CV models can scan millions of images and video frames in a flash to detect nudity, violence, weapons, or any other visual content your policies prohibit.

- Advanced Classifiers: These tie the other signals together. Embedding-based classifiers map content into a numerical space where similar items sit close together. That helps them recognize violations that share meaning but not exact wording, such as new scam templates or coded language that a keyword list would miss.

Putting AI Into Action on Your Platform

Getting AI moderation right means building a workflow that plays to its strengths: speed and scale. The system should act as a powerful initial filter, sorting all incoming content into different queues based on severity and the AI's own confidence score.

Your goal is a tiered response system. The most egregious, zero-tolerance content is blocked instantly and automatically, while borderline cases are flagged and routed to a specialized human review queue for a final decision.

This gives you a rapid response to the biggest threats while keeping the system from making overly aggressive mistakes on ambiguous content. For instance, a roleplaying scenario on an NSFW AI platform might contain language that could seem alarming out of context. A well-tuned model, with humans reviewing its borderline calls, can learn much of that difference and cut down on the false positives that kill the user experience.
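The triage step can be sketched as a pair of per-category thresholds on a classifier's confidence score. This is a minimal Python sketch. The category names and threshold values are hypothetical placeholders, not recommendations; in practice you would tune them against your own labeled review data.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical per-category thresholds: (auto_block_at, human_review_at).
# Scores are a classifier's confidence in [0, 1] that an item violates that category.
THRESHOLDS = {
    "illegal": (0.50, 0.05),      # zero tolerance: block early, review almost anything
    "hate_speech": (0.95, 0.60),
    "harassment": (0.97, 0.70),   # roleplay makes this noisy, so lean on humans
    "spam": (0.90, 0.50),
}

RANK = {"allow": 0, "human_review": 1, "block": 2}


@dataclass
class Routing:
    outcome: str                  # "allow", "human_review", or "block"
    category: Optional[str]
    score: float


def route(scores: Dict[str, float]) -> Routing:
    """Return the most severe outcome across every category the model scored."""
    best = Routing("allow", None, 0.0)
    for category, score in scores.items():
        block_at, review_at = THRESHOLDS[category]  # assumes only known categories
        if score >= block_at:
            outcome = "block"
        elif score >= review_at:
            outcome = "human_review"
        else:
            continue
        # Prefer the more severe outcome; break ties with the higher score.
        if (RANK[outcome], score) > (RANK[best.outcome], best.score):
            best = Routing(outcome, category, score)
    return best
```

The wide gap between the two harassment thresholds is the design point. Only near-certain scores are blocked automatically, and everything in between goes to a person who can read the roleplay context.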

The Power of Automation in NSFW Contexts

For an adult platform, the stakes couldn't be higher. Automated systems are absolutely essential for proactively identifying and removing illegal content like CSAM, which requires an immediate, zero-tolerance response. They are also your frontline tool for enforcing age verification gates and preventing underage access.

This proactive stance is everything. By using AI effectively, you create a safer space where users feel protected and can engage freely within the rules you’ve set. This doesn’t just build trust; it ensures the long-term health and viability of your community. For platforms built around creative expression, like those exploring NSFW AI interactions, this automated safety net allows for creative freedom while maintaining a secure and compliant ecosystem.

Ultimately, a robust AI moderation system is the foundation of any healthy, thriving online community. It delivers the sheer scale needed to manage today’s content volumes and the speed required to act before harm can spread.

The Indispensable Role of Human Reviewers

While your AI systems are working tirelessly, acting as a massive, high-speed filter, they're still just tools built on patterns. They’re phenomenal at handling sheer volume, but they can't reliably grasp intent, keep up with evolving slang, or tell genuine malice from edgy satire. This is where your human review team becomes the absolute cornerstone of your user-generated content moderation.

These aren't just button-pushers. They are the ultimate arbiters of your community standards. A human moderator can see the subtle context in a roleplay scene that an algorithm might mistakenly flag as a real threat. It’s this human-in-the-loop workflow that elevates a blunt automated system into a truly intelligent and fair safety operation.

The goal is a seamless partnership. Let the AI catch the low-hanging fruit and obvious violations at scale, freeing up your human experts to focus on the messy, nuanced, and sensitive cases that require real judgment. This combination is the only way to get both speed and accuracy right.

Designing an Effective Review Workflow

To get the best out of your human team, you can't just throw a list of flagged content at them and hope for the best. They need a purpose-built workflow with tools that give them the full picture, empowering them to make consistent, policy-aligned decisions every single time.

A great workflow is all about one thing: context.

This means building review tools that don’t just show a single offending message or image in a vacuum. A moderator needs to see the surrounding conversation, the user's history, and any past infractions. Without that context, they’re flying blind. And that leads to bad calls, frustrated users, and a slow erosion of trust in your platform.

A well-oiled workflow should always include a few key things:

- Contextual Review Interfaces: Tools that show the flagged content right alongside relevant user data and conversational history.

- Clear Escalation Paths: A tiered system where frontline moderators handle the everyday stuff, while seasoned specialists tackle the really tough cases like doxxing or severe harassment.

- Policy Guides at Their Fingertips: Instant access to a searchable, example-rich knowledge base of your content policies. This ensures every decision is consistent and can be defended.
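As a rough illustration of giving reviewers context instead of an item in a vacuum, here is a Python sketch that bundles a flagged message with the messages around it and the author's prior infractions. The `Message` and `ReviewCase` types, the input shapes, and the default three-message window are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Message:
    msg_id: int
    conversation_id: int
    author_id: int
    text: str


@dataclass
class ReviewCase:
    flagged: Message
    context: List[Message]          # nearby messages in the same conversation, flagged one included
    prior_infractions: List[str]    # the author's past confirmed violations


def build_case(flagged_id: int, messages: List[Message],
               infractions_by_user: Dict[int, List[str]],
               window: int = 3) -> ReviewCase:
    """Bundle a flagged message with its surrounding conversation and the author's history.

    `messages` is assumed to be in the order the messages were sent.
    """
    flagged = next(m for m in messages if m.msg_id == flagged_id)
    convo = [m for m in messages if m.conversation_id == flagged.conversation_id]
    i = next(idx for idx, m in enumerate(convo) if m.msg_id == flagged_id)
    return ReviewCase(
        flagged=flagged,
        context=convo[max(0, i - window): i + window + 1],
        prior_infractions=infractions_by_user.get(flagged.author_id, []),
    )
```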

This kind of structure doesn't just make your decisions more accurate; it makes your team more efficient, letting them apply their expertise where it truly counts.

The most sophisticated AI in the world can't replace human empathy and contextual understanding. An effective human review team is the heart of a fair and trusted moderation system, ensuring your platform's rules are applied with wisdom, not just code.

The Psychological Demands of Moderation

Let's be blunt: the job of a content moderator is psychologically brutal. Day in and day out, they are exposed to the very worst of the web, from graphic violence to vitriolic abuse. For platforms in the NSFW space, this exposure can be especially intense and relentless.

Ignoring this reality isn't just unethical—it's a direct threat to your entire safety operation.

Moderator burnout is a massive risk. It leads to sloppy work, slower response times, and a revolving door of staff. A moderator who is emotionally and mentally spent simply can't make the sharp, nuanced judgments your platform depends on. Investing in their well-being isn't a perk; it's a core business necessity.

Robust support systems are non-negotiable. This must include:

- Professional Mental Health Support: Provide confidential access to counselors and therapists who are actually trained in handling trauma from online content exposure.

- Wellness Programs: Offer practical resources like mindfulness training, stress management workshops, and mandatory breaks away from the queue.

- Peer Support Groups: Create a safe space for moderators to connect with colleagues who truly understand the unique pressures of the job. It helps to know you're not alone.
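Mandatory breaks hold up better when the review tooling enforces them instead of leaving them to willpower. Here is a minimal Python sketch that assumes hypothetical per-block and per-shift caps on time spent in the severe-content queue. The actual limits should come from clinical and wellness advisers, not from this example.

```python
from dataclasses import dataclass

# Hypothetical limits, for illustration only.
MAX_SEVERE_MINUTES_PER_BLOCK = 60
MAX_SEVERE_MINUTES_PER_SHIFT = 180
BREAK_MINUTES = 15


@dataclass
class ModeratorShift:
    severe_minutes_block: int = 0   # severe-queue minutes since the last break
    severe_minutes_shift: int = 0   # severe-queue minutes across the whole shift

    def log_severe_review(self, minutes: int) -> None:
        self.severe_minutes_block += minutes
        self.severe_minutes_shift += minutes

    def take_break(self) -> None:
        # A break resets the per-block counter but not the shift total.
        self.severe_minutes_block = 0

    def next_assignment(self) -> str:
        if self.severe_minutes_shift >= MAX_SEVERE_MINUTES_PER_SHIFT:
            return "low_severity_queue"          # no more severe content this shift
        if self.severe_minutes_block >= MAX_SEVERE_MINUTES_PER_BLOCK:
            return f"mandatory_break_{BREAK_MINUTES}m"
        return "severe_queue"
```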

By building a genuinely supportive and healthy environment, you protect your people and, in turn, protect the quality and long-term health of your user-generated content moderation. A well-supported team is an accurate, resilient, and high-performing team: the true guardians of your community.

Navigating Privacy Compliance and User Rights

Great user-generated content moderation is more than just a clean-up crew for bad content. It's a delicate dance between keeping your platform safe and respecting your users' privacy. In the adult space, this isn't just a best practice; it's absolutely mission-critical. One misstep with user data won't just break trust. It can bring down a hailstorm of legal and financial penalties that could easily shut you down for good.

You have to think of privacy as a foundational part of your design, not an afterthought. Every single piece of information you handle, whether it's a chat log or an ID for age verification, is a massive responsibility. You're making a promise to your users: we’ll keep you safe, and we’ll guard your data while we do it.

The Non-Negotiables of Legal Compliance

For any platform that deals with adult content, the first line of defense is rock-solid age verification. This is not optional. It’s a legal and ethical bright line you can't cross, designed to keep minors off your service. Methods range from simple self-declaration, which is increasingly treated as insufficient on its own, to stronger checks such as ID document verification or third-party age-assurance services. The goal is always the same: create a real barrier that protects vulnerable users and your business.

Beyond that gate, you're operating in a maze of data privacy laws. Think of regulations like Europe's GDPR (General Data Protection Regulation) or California's CCPA (California Consumer Privacy Act). These aren't just suggestions; they lay down strict, unbendable rules about how you collect, store, and manage personally identifiable information (PII).

These laws empower your users with specific rights, including:

- The Right to Access: Users have the right to ask for a complete copy of the data you have on them.

- The Right to Deletion: They can ask you to erase their personal information, and you must comply unless a legal exception applies, such as an obligation to retain certain records.

- The Right to Know: You must be crystal clear about what data you're collecting and exactly why you need it.
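Deletion requests touch moderation data too. Erasure rights under laws like the GDPR have exceptions, for example where you are legally required to keep a record. This minimal Python sketch assumes a hypothetical in-memory record store where compliance staff set a `legal_hold` flag on records that must be retained. A real system would also have to purge backups, caches, and any third-party processors.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Record:
    user_id: int
    kind: str                 # e.g. "chat_log", "profile", "moderation_case"
    legal_hold: bool = False  # hypothetical flag set by legal/compliance staff


def handle_deletion_request(user_id: int,
                            store: List[Record]) -> Tuple[List[Record], List[Record]]:
    """Return (remaining_store, retained_for_user).

    The user's records are dropped unless they are under legal hold. Held records
    stay in the store and are also returned, so the reply to the user can say
    what was kept and why.
    """
    remaining: List[Record] = []
    retained: List[Record] = []
    for rec in store:
        if rec.user_id != user_id:
            remaining.append(rec)      # other users' data is untouched
        elif rec.legal_hold:
            remaining.append(rec)      # kept, and reported back to the requester
            retained.append(rec)
        # Otherwise the record is not copied over, i.e. it is deleted.
    return remaining, retained
```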

Getting this wrong is expensive. Under the GDPR, the most serious infringements can draw fines of up to €20 million or 4% of a company's worldwide annual turnover, whichever is higher. Your entire operation, from your moderation queues to your record-keeping, has to be built from the ground up to meet these legal demands. To see how these principles look in practice, you can dive into our privacy policy, where we outline our own commitment to protecting user data.

Building Trust Through a Fair Appeals Process

Let's be honest: no moderation system is flawless. Not your AI, not your human team. Mistakes will inevitably happen. The difference between a platform people trust and one they despise is what happens next—when a user feels you got it wrong. A clear, easy-to-find, and genuinely fair appeals process is one of the most powerful tools you have for building a loyal community.

Giving users a way to challenge a moderation decision shows you respect them. It turns moderation from a cold, top-down order into a real conversation and proves you care more about fairness than just being right.

An appeals process is more than just good PR; it's also an incredible feedback mechanism. When you start tracking why people appeal, you get a direct look at where your policies are confusing or where your AI models are consistently messing up. That data is pure gold. It helps you tweak your guidelines and sharpen the accuracy of your entire user-generated content moderation system over time.
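Here is a minimal Python sketch of that feedback loop. It assumes each closed appeal is logged with the policy category cited, whether the original call came from the AI or a human, and whether the appeal succeeded. The field names and the minimum sample size are hypothetical.

```python
from collections import Counter
from typing import Dict, List, NamedTuple, Tuple


class Appeal(NamedTuple):
    category: str     # policy category cited in the original decision
    decided_by: str   # "ai" or "human": who made the original call
    overturned: bool  # True if the appeal reversed the decision


def overturn_rates(appeals: List[Appeal],
                   min_count: int = 20) -> Dict[Tuple[str, str], float]:
    """Overturn rate per (category, decided_by), skipping groups too small to trust."""
    totals = Counter((a.category, a.decided_by) for a in appeals)
    wins = Counter((a.category, a.decided_by) for a in appeals if a.overturned)
    return {key: wins[key] / n for key, n in totals.items() if n >= min_count}
```

Splitting by who made the original call helps locate the problem. A high rate for `("harassment", "ai")` but not `("harassment", "human")` points at the model, while a high rate for both points at the policy wording.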

Think of it this way. A user who gets banned with no explanation becomes a disgruntled ex-customer who will probably bad-mouth you everywhere. But a user who can appeal—even if you end up sticking with the original decision—was at least given a fair shot. That simple act of procedural justice can make all the difference in defending your reputation and growing a community that feels seen and respected.

Your Practical UGC Moderation Implementation Plan

Alright, let's move from theory to action. Building a real-world safety system isn’t just a technical challenge; it’s about creating a living, breathing framework for your community. This is your roadmap for taking all these concepts and turning them into a practical, day-to-day operation that protects users and your platform. Think of this as the ground-up blueprint for a safer, more engaging space.

First things first: you need a constitution. Before you even think about software or hiring, you have to write your community guidelines. These rules are your foundation. They need to be crystal clear about what’s okay and what’s absolutely not, leaving zero gray area, especially for those zero-tolerance violations. Every single decision your AI and your human team makes will flow directly from this document.

Building Your Moderation Engine

With your rulebook in hand, it’s time to build the machinery. This is where you bring together the tech and the people who will enforce those rules. The goal is to create a slick pipeline where automation does the heavy lifting, flagging potential issues at scale, while your human experts handle the judgment calls that a machine just can't make.

Here’s what that build-out looks like:

- Pick Your AI Tools: You need to partner with AI providers who get the nuances of NSFW content. Look for specialists in NLP and computer vision whose models can be fine-tuned specifically for the kind of conversations and media happening on your platform.

- Design Your Human Review Queues: Don't just dump all flagged content into one big bucket. Create specialized queues. One for harassment, one for spam, and a high-priority one for severe, illegal content. This gets the right eyes on the right problem, fast.

- Establish Clear Escalation Paths: Your frontline moderators need a lifeline. Create a simple, multi-tiered system so they can instantly escalate tricky or disturbing cases to a senior reviewer, your legal team, or even law enforcement when necessary.
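Here is a Python sketch of specialized queues plus an escalation ladder. It assumes three hypothetical queue names, a per-reviewer set of queues they are trained for, and a simple linear escalation path. Real escalation often skips tiers (a suspected illegal item may go straight to legal), so treat the linear ladder as a simplification.

```python
from collections import deque
from typing import Deque, Dict, Optional, Set, Tuple

# Hypothetical specialized queues, most urgent first.
QUEUE_ORDER = ["illegal", "harassment", "spam"]

# Hypothetical escalation tiers a case can move up through.
ESCALATION = ["frontline", "senior_reviewer", "legal", "law_enforcement"]


class ReviewQueues:
    def __init__(self) -> None:
        self.queues: Dict[str, Deque[str]] = {name: deque() for name in QUEUE_ORDER}

    def push(self, queue: str, item_id: str) -> None:
        self.queues[queue].append(item_id)

    def next_for(self, trained_on: Set[str]) -> Optional[Tuple[str, str]]:
        """Oldest item from the most urgent queue this reviewer is trained for."""
        for name in QUEUE_ORDER:
            if name in trained_on and self.queues[name]:
                return name, self.queues[name].popleft()
        return None  # nothing this reviewer is cleared to handle right now


def escalate(tier: str) -> str:
    """Move a case up one tier; the top tier stays where it is."""
    i = ESCALATION.index(tier)
    return ESCALATION[min(i + 1, len(ESCALATION) - 1)]
```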

Launching and Optimizing Your System

Once the system is built, the real work begins. This phase is all about execution, being open with your users, and constantly getting better. A successful launch isn't just about flipping the switch; it's about creating feedback loops that make your moderation smarter every single day. And trust me, this investment pays off. Campaigns using authentic user content see 50% higher engagement than those with only brand-created posts, and that kind of authentic content only flows on platforms users trust enough to contribute to. You can find more stats on UGC's business impact to back this up.

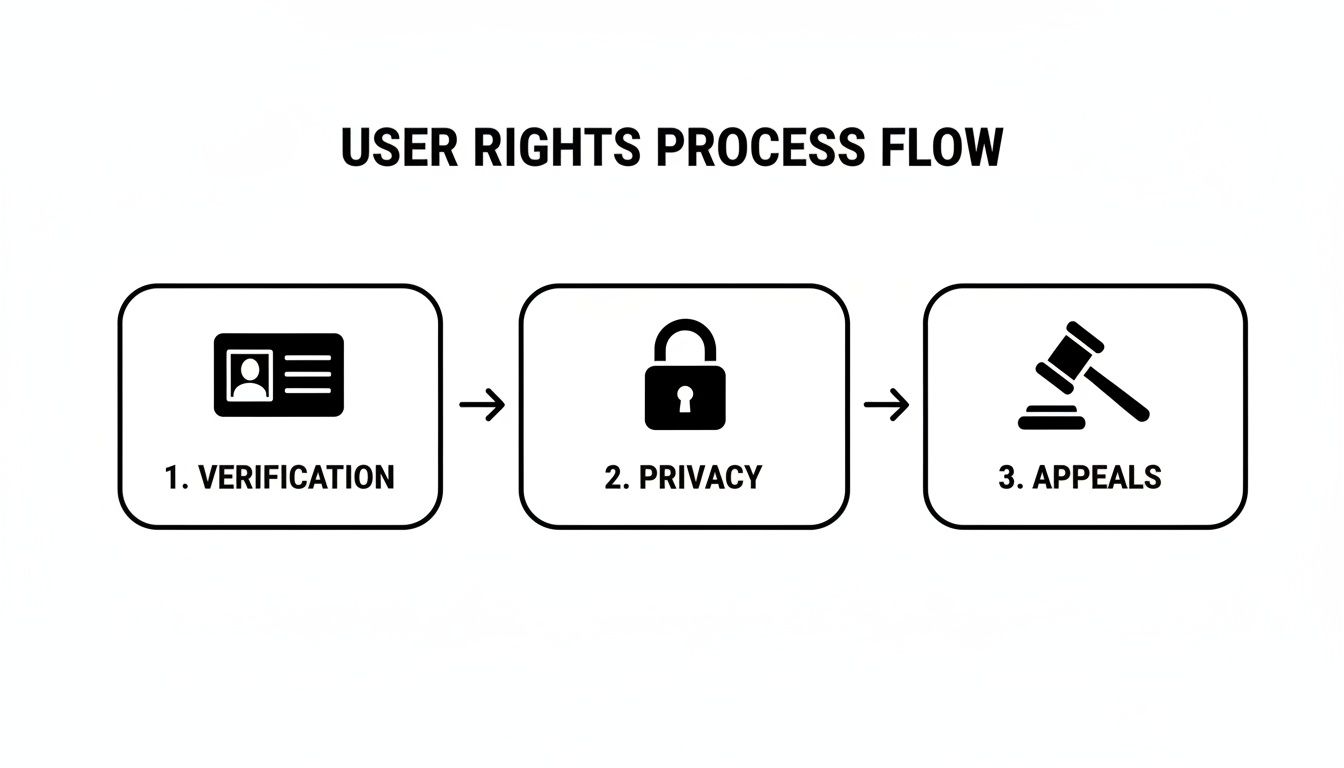

This flowchart maps out what a user-first safety process looks like, emphasizing their right to verification, privacy, and fair appeals.

As you can see, a system that users trust is one that balances strong safety protocols with a genuine respect for their rights at every turn.

A great moderation system is never "done." It evolves. It adapts to new threats, new user behaviors, and shifting community norms. The key to long-term success is constant monitoring and a willingness to refine your approach.

To put this into practice, you need a transparent appeals system that gives users a real chance to be heard if they think you made the wrong call. Finally, build your metrics dashboard. This is your command center, where you'll track everything from moderator accuracy and AI precision to how long it takes to review a piece of content. That data is gold—it’s what you’ll use to make every future improvement.
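Here is a Python sketch of three dashboard numbers: AI precision, moderator accuracy, and median time to review. Moderator accuracy is measured here as agreement with a QA auditor's re-review, which is one common proxy but not the only one. The record shapes are assumptions.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional, Tuple


@dataclass
class ReviewedItem:
    ai_flagged: bool    # did the model flag this item?
    violation: bool     # final human verdict
    queued_at: float    # seconds, when the item entered a review queue
    decided_at: float   # seconds, when a moderator closed it


def ai_precision(items: List[ReviewedItem]) -> Optional[float]:
    """Share of AI flags that humans confirmed as real violations."""
    flagged = [it for it in items if it.ai_flagged]
    if not flagged:
        return None
    return sum(it.violation for it in flagged) / len(flagged)


def moderator_agreement(audits: List[Tuple[bool, bool]]) -> Optional[float]:
    """Share of (moderator_verdict, qa_verdict) pairs that agree."""
    if not audits:
        return None
    return sum(m == qa for m, qa in audits) / len(audits)


def median_review_seconds(items: List[ReviewedItem]) -> float:
    """Median time from entering the queue to a decision."""
    return median([it.decided_at - it.queued_at for it in items])
```

Precision only covers items the model flagged. Estimating what the model misses (recall) requires human review of a random sample of unflagged content.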

Frequently Asked Questions About UGC Moderation

Let's be honest: navigating user-generated content moderation is tough, especially in the adult space where the lines can feel incredibly blurry. If you're building a platform, you've probably wrestled with these questions. Here are some straight answers based on real-world experience.

How Do You Balance Safety with Free Expression?

This is the million-dollar question, isn't it? The trick is to stop thinking about your content policy as a list of "don'ts" and start seeing it as the foundation for creativity.

Your job is to define the hard lines—the absolute zero-tolerance stuff like illegal content, real-world harassment, or anything involving minors—and enforce them without mercy. When you do that, you're not restricting people. You're actually building a container where they feel safe enough to truly let go and explore everything else.

Think of it this way: when users trust you to handle the genuinely dangerous things, they feel much more comfortable and secure pushing creative and consensual boundaries. You’re not killing the vibe; you’re protecting it.

What’s the Best Way to Handle AI False Positives?

AI is a workhorse, but it's a blunt instrument. It's fast, but it often lacks the context to understand nuance, especially in creative roleplay. A simple "no" can be a hard boundary or part of a consensual scene, and your AI may not be able to tell the difference.

This is where a strong human-in-the-loop system is non-negotiable.

When your AI flags something that isn't an obvious, slam-dunk violation, it should never trigger an automatic ban. Instead, that content needs to go straight into a queue for human eyes.

This hybrid approach stops your platform from punishing users for imaginative scenarios that an algorithm misinterpreted. It’s how you get the speed of automation without sacrificing the trust of your community.

How Can a Small Team Moderate a Growing Platform?

You can't hire your way out of a content explosion—it's a losing game. The only way for a small team to survive is to be smart and strategic. It all comes down to leverage and a tiered defense.

Here’s how you do it:

- Automate the Easy Stuff: Use AI to catch and block the low-hanging fruit. Think spam, phishing links, and known illegal media (caught by matching uploads against hash lists of previously identified material). This clears out the high-volume noise so you can focus.

- Empower Your Community: Your users are your greatest asset. Build simple, clear reporting tools so they can flag content that breaks the rules. They’re your eyes and ears on the ground, and they extend your team's reach far beyond its headcount.

- Focus Your Experts: With the first two layers handling the bulk of the work, your highly trained human moderators can dedicate their time to what they do best: handling the complex, sensitive cases that require real judgment.
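Here is a minimal sketch of the first two layers, using only the Python standard library. It assumes a hypothetical blocklist of SHA-256 digests and a hypothetical threshold of three distinct reporters. An exact hash like SHA-256 only matches byte-identical files. Production systems typically use perceptual hashes (for example Microsoft's PhotoDNA or Meta's open-source PDQ), obtained through industry hash-sharing programs, so that resized or re-encoded copies still match.

```python
import hashlib
from collections import defaultdict
from typing import DefaultDict, Set

# Hypothetical blocklist of SHA-256 digests for known prohibited files.
# SHA-256 only matches byte-identical copies; see the note above on perceptual hashes.
KNOWN_BAD_SHA256: Set[str] = set()


def is_known_bad(file_bytes: bytes) -> bool:
    """Layer 1: block an upload whose exact digest is on the blocklist."""
    return hashlib.sha256(file_bytes).hexdigest() in KNOWN_BAD_SHA256


class ReportTracker:
    """Layer 2: queue an item for human review once enough distinct users report it."""

    def __init__(self, threshold: int = 3) -> None:  # hypothetical threshold
        self.threshold = threshold
        self.reporters: DefaultDict[str, Set[str]] = defaultdict(set)

    def report(self, item_id: str, reporter_id: str) -> bool:
        """Record a report; return True only when the item first reaches the threshold."""
        before = len(self.reporters[item_id])
        self.reporters[item_id].add(reporter_id)  # a set ignores repeat reports
        after = len(self.reporters[item_id])
        return before < self.threshold <= after
```

Counting distinct reporters, rather than raw reports, keeps a single user from pushing an item into the queue by reporting it over and over.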

This is how a tiny team can effectively manage a massive platform. It’s not about more people; it’s about a smarter workflow.

At Luvr AI, we're obsessed with creating a space where creativity can flourish because safety is a given. Want to see what that feels like? Discover how we balance user freedom with a robust safety-first approach. Create your own AI companion and experience the difference a well-moderated community makes at https://www.luvr.ai.